It’s a bold opening line, and one that I’m going to heavily qualify, but migrating data from NAV to the cloud is easy.

However, the number of explanations & qualifications has lead this to be quite a lengthy post. If you want to dive straight in & follow the standard documentation, it can be found here with some useful FAQs here.

Once you’ve taken the time to make sure that you’ve migrated your customer to a standard Business Central on-premise instance, the full glory of replicating data from that instance to a cloud instance is just a few clicks away.

It’s also, surprisingly, documented very clearly, but like most BC documentation it can be hard to find via google (I’ve looked up the page 6 or 7 times now, and I still never find it on my first search term).

So what do we need to know?

Replication is achieved by utilising the ‘Intelligent Cloud’ which comes included with your Business Central SaaS tenant – even in a trial. In short, you connect an existing SQL database to Azure using a tool provided by Microsoft, and with a little configuration this will suck the data right out of your on-prem database & shoot it up into your cloud, leaving you with two systems that match exactly.

This has a few caveats though. It means that the two database schemas must match exactly. The exact same extensions must be on the cloud & on-prem, there must be absolutely no modifications in C/AL, and you must have installed the test tool on your on-prem database (but I’m sure you’ve done that anyway, who wouldn’t want the test tool?!).

Also, in the interest of full disclosure, I’ve had 2 weeks of support calls with MS between starting on this & having it working. I’ll detail this more in a later post, but I’m confident that it is possible to do this yourself, if the proper work is done up front. The bigger issues I’ve faced were bugs in the process and have now been resolved by the development team at MS.

It all starts, by migrating to Business Central on-premise. I’ll not go into detail about that in this already lengthy post (look for future updates!) but this is very similar to the NAV upgrade process we’ve been following for years. Convert the database, migrate the data, you’re on BC.

Once that’s completed, you’ll need to make sure the on-prem database has a compatibility level of 130, and (this is undocumented) enable change tracking on your SQL database tables. I’ve included a SQL script below that will do this. When data is replicated from your on-prem tenant to the cloud, it will only replicate data which has changed since the previous replication run; change tracking allows the system to know which data has changed & selectively replicate it.

You’ll then need to review which extensions are installed on-prem and in the SaaS environment. Of course, having followed the upgrade steps to the letter, you’ll have installed the extensions included in the BC install media, which includes the intelligent cloud stuff. There are several standard extensions which only exist for SaaS however, and you’ll need to remove those, as well as making sure any custom extensions which you’ve added to the on-prem tenant are also installed up in the cloud.

I cannot stress enough the importance of having the exact same extensions in both systems, as well as having absolutely no C/AL modifications. I’d also recommend making sure you have the same localisation for each version.

With that complete, you’re now prepared & ready to start the migration process in earnest.

Simply go to ‘Assisted Setup’ in your cloud environment and then select ‘Set up Intelligent Cloud’

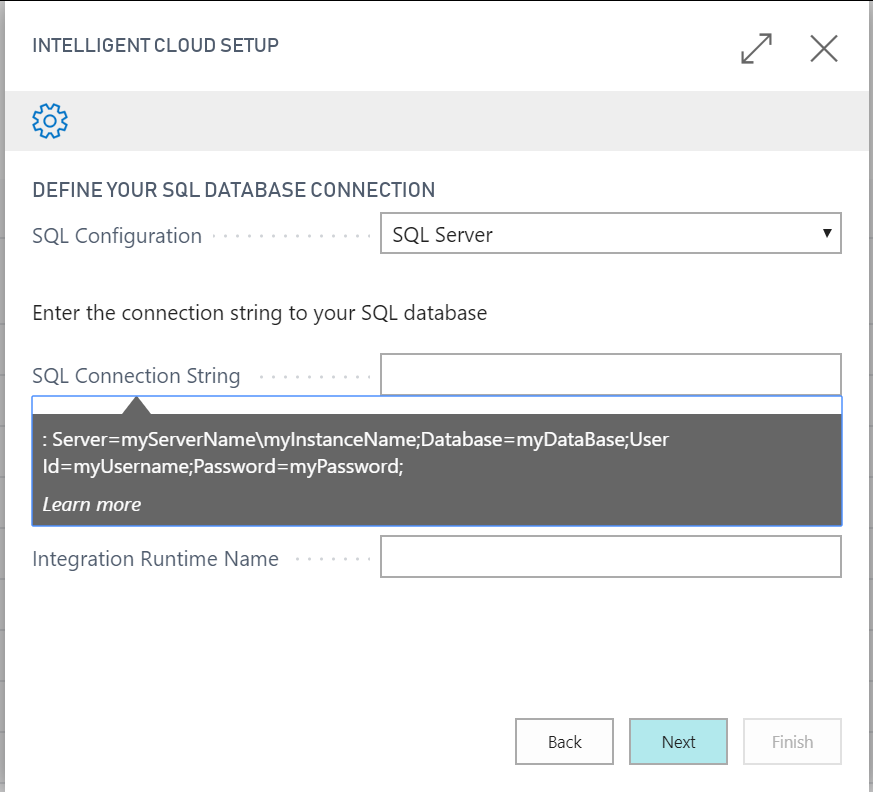

You’ll then be presented with a wizard. Agree to the legal stuff, and then on the next page use the lookup to select ‘Dynamics 365 Business Central’, then leave ‘SQL Configuration’ as SQL Server, give it a SQL connection string & if this is your first time setting up the intelligent cloud, also leave the ‘Integration Runtime Name’ blank.

If you click the ‘SQL Connection String’ label, you’ll get a handy tool tip with an example you can copy:

The UserID & Password need to be for a user account which uses SQL authentication, which has both read & modify permissions on your on-prem database. There are some stored procedures created in order to run this. We went with an account set as DBO, but I’m not a SQL admin so would advise you consider the security level you wish to give to this account for yourself.

Once you’ve pressed ‘Next’ you’ll be given some details of how to download & setup the ‘Self Hosted Integration Runtime’.

This is a tool which allows for the communication of data between Azure Data Factory & a on-premise SQL database.

It must be installed on a server which can connect to the SQL server; The SHIR can be reused for multiple connections, for different databases, and for purposes which are not BC related, so I’d recommend installing it somewhere central if you have multiple customers utilising the same SQL server, or multiple SQL servers on the same network.

After this, if your config is correct, you’ll get a list of companies to select from. If it’s not successful, you’ll get an error. You can diagnose this by going to the server where the SHIR is installed, and looking at the event viewer, under ‘Applications and Services Logs’ > ‘Connectors – Integration Runtime’

If things have gone wrong, there will likely be errors and warnings in there.

If things have gone successfully, you’ll be asked to setup a schedule. Do bare in mind that this can lock tables, so I’d suggest making it run at a quiet time.

Once the wizard is complete, you’ll find that your SaaS tenant now includes all the companies you selected to replicate (with no data in them yet), but that the SaaS environment has become effectively ‘read only’, with all user permissions dropped.

This is because the replication is one way. Once you’ve got all the data you want and you’re ready to move to SaaS as the main system, you simply disable the cloud replication, set your user permissions & enjoy the world of cloud.

The data will be migrated whenever you’ve scheduled it for, in my experience this took around an hour from the scheduled time to completion.

I’ll follow up with another post which details some of the issues I’ve faced after setup & how to manage your replication, as well as how to investigate & resolve issues I’ve faced.

The SQL Script to enable the change tracking is below:

DECLARE @ReplicatedTables AS TABLE (Id int, [Table Name] nvarchar(128))

INSERT INTO @ReplicatedTables

SELECT ROW_NUMBER() OVER (ORDER BY [Company Name], [Table Name]), [Table Name] FROM [Intelligent Cloud Status]

DECLARE @i int = 0, @modifiedCount int = 0

DECLARE @count int = (SELECT COUNT(*) FROM @ReplicatedTables)

WHILE @i < @count

BEGIN

SET @i += 1

DECLARE @TableName nvarchar(128)

SELECT @TableName = [Table Name] FROM @ReplicatedTables WHERE Id = @i

IF NOT EXISTS(SELECT TOP 1 1 FROM sys.change_tracking_tables WHERE object_id = OBJECT_ID(@TableName))

BEGIN

DECLARE @sql nvarchar(250)

SET @sql = 'ALTER TABLE ' + QUOTENAME(@TableName) + ' ENABLE CHANGE_TRACKING'

EXEC sp_executesql @sql

SET @modifiedCount += 1

END

END

SELECT 'Enabled change tracking on ' + CAST(@modifiedCount as nvarchar(12)) + ' tables.'

What was the size of the database that took about an hour

LikeLike

It’s currently around 6gb, but since starting this I removed 7 or 8 test companies that were not in use, and it’s still remained around the same amount of time

LikeLike

Hello, The SQL Script you provided. is it nexessary to launch it before using the Intelligent Cloud Extension.

And where did you the change tracking ?

LikeLike

Hello, The SQL Script you provided. is it nexessary to launch it before using the Intelligent Cloud Extension.

And where did you the change tracking ?

LikeLike

Hi, it was required at the time of writing but things have moved on since then, the last time I ran this it was not required – but this would depend on what version of nav/bc the source version was created in I think.

However, it can’t hurt to run it to be sure, and yes it is before using the assisted setup process.

LikeLike

Hi

Thanks for the quick feedback.

Where do you see the change tracking ? Or this is just a property to change to complete the process of migration ? (I understood that depends of the version).

I tried with a custom database and I have this error message with the status ‘Failed’

ErrorCode=SystemErrorOutOfMemory,’Type=Microsoft.DataTransfer.Common.Shared.HybridDeliveryException,Message=A task failed with out of memory.,Source=Microsoft.DataTransfer.TransferTask,”Type=System.OutOfMemoryException,Message=Exception of type ‘System.OutOfMemoryException’ was thrown.,Source=mscorlib,”Type=System.OutOfMemoryException,Message=Exception of type ‘System.OutOfMemoryException’ was thrown.,Source=mscorlib,”Type=System.OutOfMemoryException,Message=Exception of type ‘System.OutOfMemoryException’ was thrown.,Source=mscorlib,”Type=System.OutOfMemoryException,Message=Exception of type ‘System.OutOfMemoryException’ was thrown.,Source=mscorlib,’

Do you understand what happened ?

I cant find any stack trace or something else to identify the problem and fix.

I used the extension with a nav 2018 database

Thanks.

LikeLike